Building Software with AI Agents

Strengthening Loops and the Return of Judgment

Software development with AI agents does not feel like automation.

It feels closer to apprenticeship.

For decades the dominant mental model of software engineering was linear: plan the system, implement the design, test it, ship it. Improvements came from better planning, more detailed specifications, and stronger processes designed to catch mistakes before they happened.

AI-assisted development changes that shape.

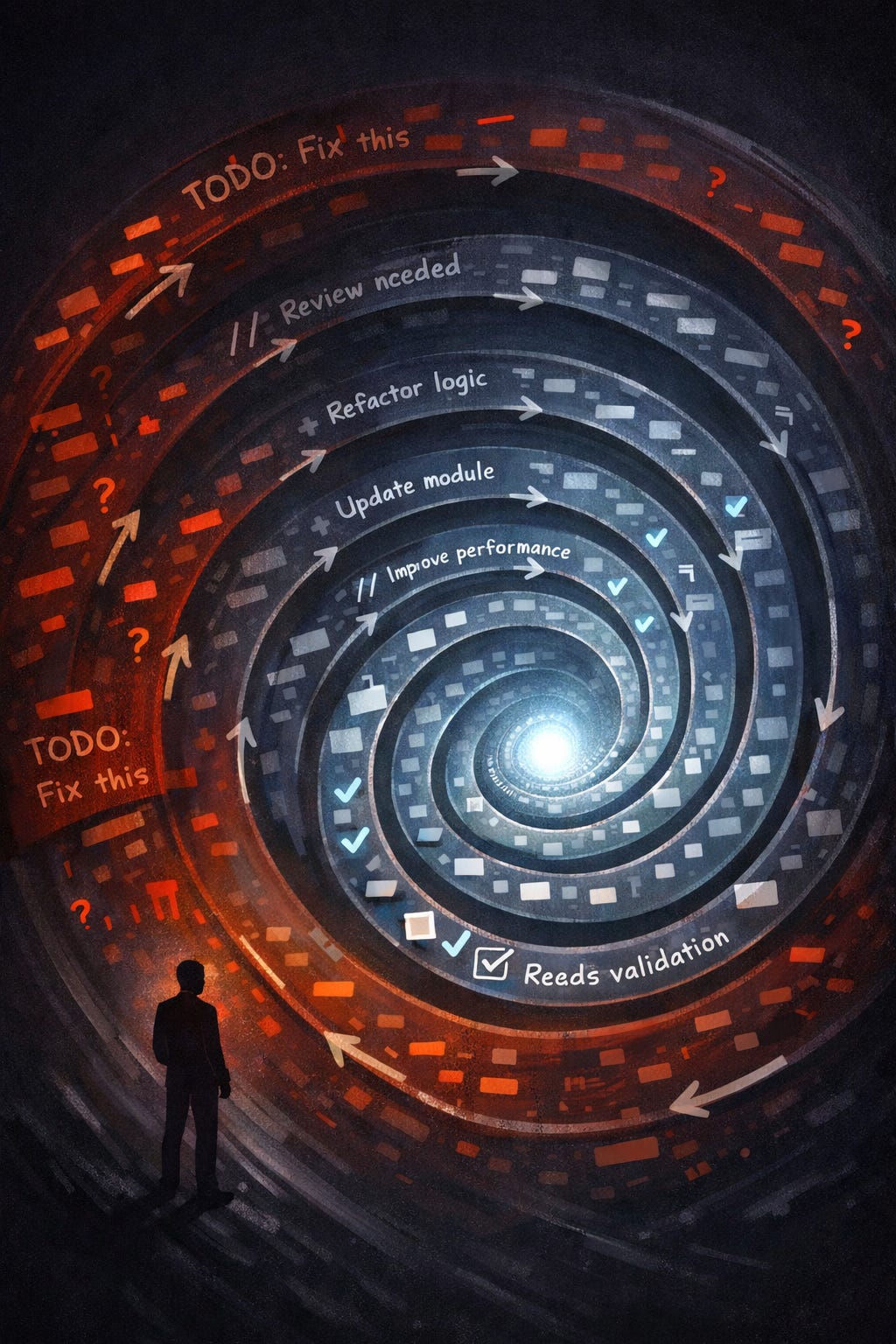

The process becomes cyclic rather than linear. Work proceeds not through perfect plans but through repeated tightening. A rough implementation appears quickly. It is reviewed, criticized, adjusted, reviewed again. Each pass improves something small. Each cycle locks the gain before the next begins.

The shape of the work becomes a strengthening loop.

What matters is not the perfection of the first attempt but the speed and honesty of the next review. The faster the loop closes, the faster the system improves. Intelligence accumulates inside the loop itself. Each iteration carries forward what was learned in the previous one.

This pattern appears at every scale.

A function is written roughly, then improved through review. A feature appears in skeletal form, then stabilizes through repeated passes against real input. An architecture emerges from experiments and corrections rather than from a perfect initial blueprint.

The loop is always the same:

Start rough.

Review critically.

Tighten what is weak.

Review again.

Commit the improvement before moving forward.

The output of one cycle becomes the input to the next.
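The cycle can be sketched in a few lines of Python as a ratchet. A revision is kept only if review scores it higher than the last committed version, and whatever is committed becomes the input to the next pass. This is an illustrative sketch, not a prescribed tool. `propose` and `review` are hypothetical stand-ins for an agent's revision and for whatever review the team trusts, and `max_cycles` is a crude stand-in for deciding when to stop.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


def strengthening_loop(
    draft: T,
    propose: Callable[[T], T],     # hypothetical: an agent's revision of the current version
    review: Callable[[T], float],  # hypothetical: a critical review that returns a score
    max_cycles: int,               # crude stand-in for the decision to stop
) -> T:
    """Keep a revision only when review scores it higher than the committed version."""
    committed, committed_score = draft, review(draft)  # start rough
    for _ in range(max_cycles):
        candidate = propose(committed)   # tighten what is weak
        score = review(candidate)        # review again
        if score > committed_score:      # commit only strict improvements
            committed, committed_score = candidate, score
        # A rejected candidate is discarded; the committed version feeds the next cycle.
    return committed
```

With a toy revision step that adds one and a review that rewards closeness to ten, `strengthening_loop(0, lambda x: x + 1, lambda x: -abs(10 - x), max_cycles=20)` climbs to 10 and then stops committing, because every later candidate scores worse. Requiring a strict improvement keeps a neutral change from displacing a version that has already passed review.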

Over time the loop strengthens itself.

The Role of the Human

The surprising shift is not that AI writes code.

The shift is that the human role becomes clearer.

Agents are capable of exploration, generation, and analysis across large surfaces of code. They can implement modules, run tests, propose refactors, and search for patterns across thousands of files. They are fast, tireless, and willing to try many approaches.

But the loop does not run without judgment.

The human provides the elements that cannot be automated.

Vision.

Taste.

Sequencing.

The stop signal.

Vision answers the question of what the system should ultimately feel like: not its functions but its character. How simple should it remain? How predictable? How resilient under pressure?

Taste filters output. Sometimes the most valuable instruction is simply “no.” Rejecting a weak direction early saves far more effort than polishing it and abandoning it later.

Sequencing decides which problems deserve attention first. In complex systems the order of improvements can matter more than the improvements themselves, because each change shapes the ground the next one is built on.

And the stop signal prevents endless refinement. Every system eventually reaches the point where it is good enough to move forward.

Agents expand the surface area of what can be explored. Humans determine which explorations matter.

The loop works because these roles remain distinct.

The Return of Empirical Development

AI-assisted development quietly returns software engineering to an older scientific pattern.

Observation before theory.

The first step in many projects is not design but diagnosis. Systems are run against real input. Behavior is observed. Failures appear in logs and traces. Only after seeing how the system behaves do architectural documents emerge.

Architecture becomes a response to reality rather than a prediction of it.

This inversion matters.

Specifications written before observation often solve imagined problems. Documentation written after observation captures constraints that actually exist.

Agents accelerate this empirical process. They can audit codebases, analyze logs, trace dependencies, and compare behavior against competitor systems in minutes. The human synthesizes the findings and decides how the architecture should change.

Information gathering becomes parallel. Decision making remains singular.

Parallel Exploration, Singular Direction

Agents make exploration cheap.

Multiple agents can research alternative approaches simultaneously. One studies an external library. Another analyzes the codebase for patterns. A third proposes architectural options. Their findings converge into a shared view of the problem.

But direction does not emerge automatically from this exploration.

The human synthesizes the results and chooses the path forward.

This pattern repeats constantly: parallel discovery followed by sequential decision.

Agents widen the search space. Humans define the trajectory.
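A minimal sketch of parallel discovery followed by a single decision, using Python's standard `concurrent.futures`. The explorer tasks and the `decide` callback are hypothetical placeholders: in practice each explorer would be an agent session, and `decide` would be the human reading the findings and choosing a path.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def explore_then_decide(
    explorers: dict[str, Callable[[], str]],  # hypothetical agent tasks, keyed by topic
    decide: Callable[[dict[str, str]], str],   # the single, human-owned decision point
) -> str:
    """Run every exploration concurrently, then make one decision from all findings."""
    # Parallel discovery: each explorer runs in its own worker thread.
    with ThreadPoolExecutor(max_workers=max(1, len(explorers))) as pool:
        futures = {topic: pool.submit(task) for topic, task in explorers.items()}
        findings = {topic: future.result() for topic, future in futures.items()}
    # Sequential decision: one call sees every finding and chooses the direction.
    return decide(findings)
```

Threads suit this shape because the real work, waiting on agents or network calls, is I/O-bound. If an exploration raises, `future.result()` re-raises the exception, so a failed explorer surfaces instead of vanishing silently.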

The Discipline of Real Input

One pattern consistently separates productive projects from drifting ones.

Everything is tested against real input as early as possible.

Synthetic tests are valuable, but they rarely expose the edge cases that real-world data reveals. Systems often behave correctly in isolation while failing in the environments they were built for.

Running the same real input repeatedly creates a stable benchmark. Every change to the system can be measured against that constant scenario. Improvements become visible. Regressions become undeniable.
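One way to make that benchmark concrete is a baseline comparison: run the system on the same captured input after every change and diff the output against the last accepted result. The sketch assumes the system can be called as a function from text to text; `process`, the input file, and the baseline file are hypothetical, and only the standard library's `difflib` and `pathlib` are used.

```python
import difflib
from pathlib import Path
from typing import Callable


def check_against_baseline(
    process: Callable[[str], str],  # hypothetical: the system under test
    input_path: Path,               # a fixed, real input captured once and reused
    baseline_path: Path,            # output recorded from the last accepted version
) -> list[str]:
    """Return a unified diff between the recorded baseline and the current output.

    An empty list means the current output matches the baseline exactly.
    """
    current = process(input_path.read_text())
    if not baseline_path.exists():
        # First run: record this output as the baseline for future comparisons.
        baseline_path.write_text(current)
        return []
    baseline = baseline_path.read_text()
    return list(
        difflib.unified_diff(
            baseline.splitlines(),
            current.splitlines(),
            fromfile="baseline",
            tofile="current",
            lineterm="",
        )
    )
```

An empty diff means nothing changed. A non-empty diff is either an improvement, in which case the baseline file is replaced deliberately, or a regression that is now visible line by line.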

The first milestone of a system is never architectural purity.

It is always the same question:

Does it produce meaningful output on real input?

Only after that milestone does refinement begin.

The Review Ritual

AI accelerates implementation so dramatically that quality control becomes the limiting factor.

The solution is ritualized review.

After each implementation block, a structured review examines the system from multiple angles: simplification opportunities, missing tests, security vulnerabilities, architectural inconsistencies, user experience flaws.

These reviews are not occasional audits. They are the rhythm of the loop itself.

The ritual exists because software almost always looks correct at first glance. Bugs hide in assumptions, dead code, untested branches, and overlooked integrations. A consistent review process forces those assumptions into the open.

Over time the ritual trains the system, and the developers working with it, to expect scrutiny.

Structural Solutions Over Prompt Engineering

A recurring lesson in AI-assisted development is the difference between reasoning problems and information problems.

When an AI system produces incorrect results, the instinct is often to refine the prompt or adjust the instructions.

In many cases the real issue is missing information.

If a model is guessing version numbers, the solution is not better prompting. The solution is to supply the verified version number directly. If a model struggles to locate definitions in a codebase, deterministic search can retrieve them precisely.
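As an illustration of the deterministic route, Python's standard `ast` module can locate definitions exactly, so the model receives file names and line numbers instead of guessing. This sketch assumes a Python codebase and matches only function and class names; `find_definitions` is a hypothetical helper, not part of any existing tool.

```python
import ast
from pathlib import Path


def find_definitions(root: Path, name: str) -> list[tuple[str, int]]:
    """Return (file, line) for every function or class called `name` under `root`."""
    hits: list[tuple[str, int]] = []
    for path in sorted(root.rglob("*.py")):
        try:
            tree = ast.parse(path.read_text(encoding="utf-8"), filename=str(path))
        except (SyntaxError, ValueError):  # ValueError also covers UnicodeDecodeError
            continue  # unparseable file: report nothing rather than guess
        for node in ast.walk(tree):
            if (
                isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef, ast.ClassDef))
                and node.name == name
            ):
                hits.append((str(path), node.lineno))
    return hits
```

For the version-number case, the standard library's `importlib.metadata.version()` reports the installed version of a package, and that value can be placed in the prompt directly.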

Structural fixes eliminate entire classes of mistakes permanently.

Prompt engineering tends to reduce the probability of error rather than remove its source.

Reliable systems move as much work as possible into deterministic analysis before asking the model to interpret the results.

Evidence first. Reasoning second.

Human Judgment as Executable Criteria

Perhaps the most powerful pattern is encoding judgment itself into evaluation systems.

Developers define evaluation scenarios that represent desired behavior. Each scenario includes a scoring rubric describing what quality looks like. Agents run the system against these scenarios, analyze the scores, adjust the implementation, and run the evaluation again.
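A scenario with a scoring rubric can be expressed as plain data plus small checks. This is a sketch under simplifying assumptions: the system is a text-to-text function, each rubric criterion is a yes/no check with a weight, and the score is the weighted share of criteria met. `Criterion`, `Scenario`, `score`, and `evaluate` are hypothetical names, and real rubrics may need graded or model-assisted judgments that a boolean check cannot capture.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Criterion:
    description: str              # what quality looks like, in the human's words
    check: Callable[[str], bool]  # an executable version of that description
    weight: float = 1.0


@dataclass
class Scenario:
    name: str
    input_text: str
    rubric: list[Criterion] = field(default_factory=list)


def score(system: Callable[[str], str], scenario: Scenario) -> float:
    """Run the system on one scenario; return the weighted share of criteria met (0.0 to 1.0)."""
    output = system(scenario.input_text)
    total = sum(c.weight for c in scenario.rubric)
    if total == 0:
        return 0.0
    earned = sum(c.weight for c in scenario.rubric if c.check(output))
    return earned / total


def evaluate(system: Callable[[str], str], scenarios: list[Scenario]) -> dict[str, float]:
    """Score every scenario so successive versions of the system can be compared."""
    return {s.name: score(system, s) for s in scenarios}
```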

The loop becomes partially automated.

Human taste remains central but shifts into a different form. Instead of reviewing every iteration manually, the human defines the evaluation framework once. Agents replay those criteria across many iterations.

Judgment becomes executable.

Quality improvements become measurable rather than subjective.

Architecture That Supports Iteration

The architectures that work best with AI-assisted development share several characteristics.

They rely on deterministic systems where possible, leaving the model to handle interpretation rather than discovery. They pass structured data between components instead of raw text, allowing modules to be tested independently. And they isolate new capabilities into separate modules rather than embedding them inside existing ones.
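To illustrate structured hand-offs, the sketch below splits log analysis into a deterministic stage that turns raw text into typed records and a downstream stage that only ever sees those records. The log format and all names are hypothetical. The point is that each stage can be tested on its own, and a model would be handed the records, or a summary built from them, rather than the raw log.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class LogError:
    timestamp: str
    component: str
    message: str


# Hypothetical log format, e.g. "12:00:01 ERROR [parser] unexpected token"
_ERROR_LINE = re.compile(r"^(\S+) ERROR \[(\w+)\] (.+)$")


def extract_errors(log_text: str) -> list[LogError]:
    """Deterministic stage: turn raw log text into typed records."""
    errors = []
    for line in log_text.splitlines():
        match = _ERROR_LINE.match(line)
        if match:
            errors.append(LogError(*match.groups()))
    return errors


def errors_by_component(errors: list[LogError]) -> dict[str, int]:
    """Downstream stage: works on records and never re-parses raw text."""
    counts: dict[str, int] = {}
    for error in errors:
        counts[error.component] = counts.get(error.component, 0) + 1
    return counts
```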

These principles make systems easier for both humans and agents to reason about.

Modular boundaries become cognitive boundaries.

Each module can be understood, tested, replaced, or improved without destabilizing the entire system.

The Persistence of Memory

One subtle constraint of AI-assisted development is context: the limited amount of information an agent can keep in view at once.

Agent sessions are temporary. Conversations reach limits. Information disappears unless it is preserved.

Persistent documents become the long-term memory of the system.

Architecture descriptions, execution plans, design notes, and structured summaries carry decisions across sessions and across time. They ensure that insights discovered in one iteration remain available to the next.
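A decision log is one simple form of this persistent memory. The sketch appends each decision, with its reason and a UTC timestamp, to a JSON Lines file that a later session can read back. The file name and fields are assumptions for illustration, not an established convention.

```python
import json
from datetime import datetime, timezone
from pathlib import Path


def record_decision(log_path: Path, decision: str, reason: str) -> None:
    """Append one decision to a JSON Lines file that outlives the agent session."""
    entry = {
        "at": datetime.now(timezone.utc).isoformat(),
        "decision": decision,
        "reason": reason,
    }
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(entry) + "\n")


def load_decisions(log_path: Path) -> list[dict]:
    """Read every recorded decision, oldest first, to seed the next session's context."""
    if not log_path.exists():
        return []
    with log_path.open(encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]
```

Appending rather than rewriting keeps earlier decisions intact, so the reasoning behind a change survives even after the decision itself is revisited.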

Writing things down is not documentation in the traditional sense.

It is operational resilience.

The Pattern Beneath the Tactics

Across projects, technologies, and teams, the same pattern appears again and again.

Software improves through strengthening loops.

Agents accelerate the loop by expanding what can be explored and implemented quickly. Humans strengthen the loop by applying judgment at the moments where direction matters.

The result is not automated development.

It is amplified development.

The system evolves through cycles of generation, criticism, correction, and consolidation. Each iteration leaves the system slightly stronger than before.

Quality does not emerge from perfect planning.

It emerges from disciplined iteration guided by human taste.

The developer is no longer the sole builder of the system.

But the developer remains the one responsible for deciding what the system should become.